At every company where I've worked, evals were everywhere. Precision, recall, NDCG, F1: dashboards full of numbers that told you whether your model was "better." And look, they matter. I'm not saying throw them out. When you're building search or recommendation systems, you need some quantitative signal that you're not shipping garbage.

But here's the thing nobody wants to say out loud: your customers don't care about your evals.

No one has ever opened DoorDash and thought, "wow, the NDCG@10 on this restaurant ranking is really tight today." They open the app and either the food suggestions feel right or they don't. That's it. That's the entire evaluation.

Some products are just vibe-based. Food recommendation is a perfect example. What makes a good recommendation? It depends on the person, the time of day, their mood, what they ate yesterday, whether they're hungover, whether they're trying to impress someone. There is no ground truth. There is no labeled dataset that captures "this person is sad and wants soup."

This is the problem with evals as a north star: they measure what's measurable, not what matters. You can optimize a ranking model until your offline metrics are pristine and still ship something that feels off to the person holding the phone. Because the thing you're actually trying to optimize — subjective utility — is, almost by definition, impossible to eval. It's personal. It's contextual. It's emotional. You can approximate it. You can proxy it. But you cannot capture it in a test suite.

Now — I want to be fair. Evals work when the function is well-defined and objective. Code that compiles or doesn't. Math that's correct or isn't. Classification where there's an actual right answer. If you're building a spam filter or a fraud detection model, yes, go stare at your precision-recall curve. That's the right tool for a problem with a ground truth.
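
To make that concrete, here's a minimal sketch of what an eval looks like when a ground truth exists. The labels and the `precision_recall` helper are made up for illustration; none of this comes from a real system.

```python
def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels (1 = spam, 0 = not spam)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # spam caught
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # good mail flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # spam missed
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical labels, invented for this example.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]  # what each message actually was
y_pred = [1, 0, 0, 1, 1, 0, 1, 0]  # what the filter said

precision, recall = precision_recall(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

The whole thing only works because `y_true` exists. For "this person is sad and wants soup," there's nothing to put in that list.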

But most product experiences aren't like that. Most of the things that make someone love or abandon your app live in the subjective, squishy, unmeasurable space between "correct" and "good."

Here's a real example. A user searches "bars for asian girls." What does that mean? It could mean a cute cocktail bar with good lighting and a vibe that feels safe and welcoming for a group of Asian women going out. The system recommended sports bars with big TVs — which, honestly, are amazing recommendations if I'm out late with the boys. But that's not what this query is about. The context isn't just cultural — it's personal, situational, intersectional in ways that collapse the moment you try to systematize them. You'd need to understand who's searching, why they're searching, what their Friday night looks like, what "good bar" means in their world. No eval captures that.

Here's the thing that really got me: evals don't work when you haven't found product-market fit. They just don't. You're measuring the precision of something that might not even be the right thing to build. You're optimizing a system whose entire premise might be wrong. It's like obsessing over the aerodynamics of a car that doesn't have an engine yet.

And here's the irony that killed me: most of the actual decisions we made internally — the ones that did move the product — weren't driven by evals at all. They were vibes. Someone on the team would use the product and say, "this feels off." A PM would pull up a query and go, "why is this result fourth?" An engineer would demo something and the room would either light up or go quiet. That was the real eval. That was the signal that actually mattered.

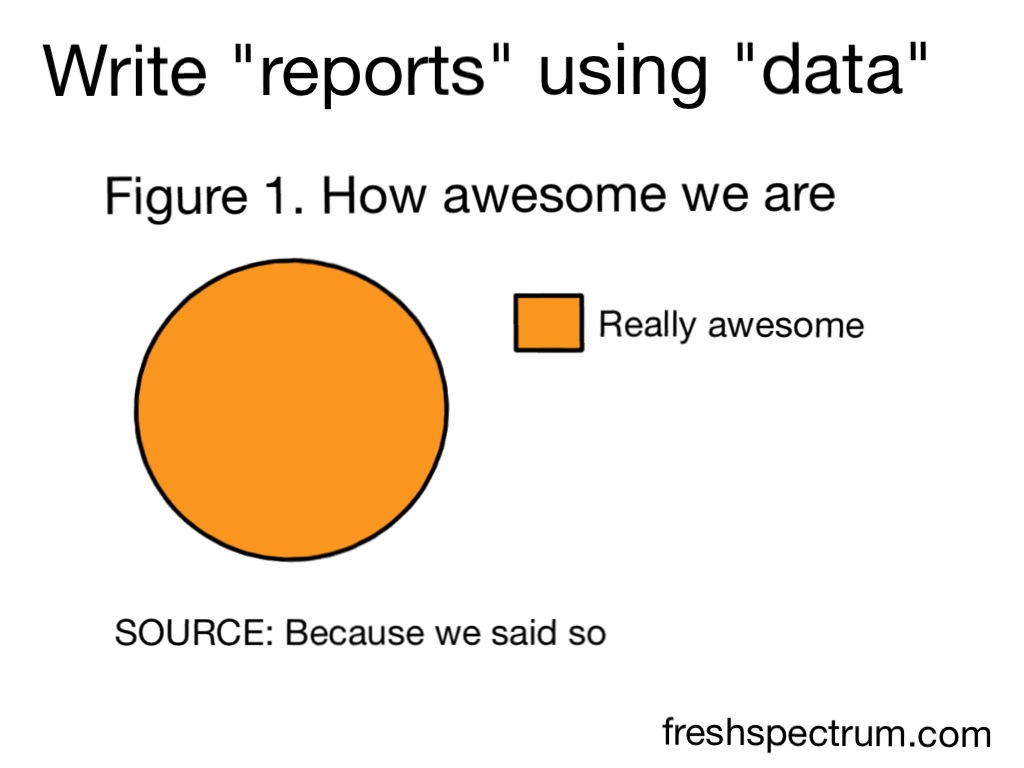

We had this whole eval infrastructure, these dashboards, these weekly metric reviews, and the real decision-making was a senior person squinting at the product and going, "nah, this isn't it." Which is taste. Which is the thing we refused to name because it doesn't fit in a spreadsheet.

I presented eval results to the CEO, COO, and CTO. Precision up 3%, recall up 5%, some cherry-picked examples. Arrow goes up, everyone nods, meeting ends early. No one in that room was going to pull up the app and check whether the user felt the difference.

It's the same way musicians don't write songs by optimizing for metrics. They don't A/B test a chord progression. They don't run evals on a bridge. They just create — from intuition, from feel, from years of absorbed context that they couldn't explain to you if they tried. And then the song either hits or it doesn't. The audience is the eval, and the audience shows up after the thing is made.

Great products work the same way. Steve Jobs never ran evals on the iPhone. He didn't need a benchmark to tell him that putting the internet in someone's pocket would change everything. He just knew — the way a musician knows when the song is done. You create, you ship, you watch. The creation comes first. The measurement comes after — if it comes at all.

That's taste. And taste is what lets you ship fast at a startup.

I've watched teams spend weeks building eval harnesses when they could've shipped three iterations and learned more from real user behavior in a day. Evals give you a false sense of rigor. They let you sit in a Jupyter notebook and feel like you're making progress without ever putting something in front of a real person.

The best product engineers I've worked with — the ones who actually moved the needle — had good taste. They could look at a feature and know it wasn't right before any metric told them. They could feel the friction. They could anticipate what a user would find delightful vs. forgettable. And they shipped. Fast. Because they trusted their judgment instead of waiting for a dashboard to give them permission.

At a startup, you don't get to hide behind evals. You don't have six months to build a benchmark suite. You don't have a quarterly review where you can present pretty charts. You have a product that either resonates or doesn't, and you have to know the difference before the data tells you, because by the time the data comes in, you're already dead.

That's why startups run on vibes. Not because vibes are unserious, but because they're the fastest signal you have. The customer is a person with a phone who's hungry and wants you to just get it. No eval on earth can tell you whether you did.